The computational efficiency of the Xeon-based Saturn V nodes, we think, is at least partly limited by the PCI-Express 3.0 links between the Xeons and the GPUs, and between the CPUs and the EDR InfiniBand adapters in the system. IBM’s top brass in the Power Systems business agrees, and thinks that a Power9-Volta combination, with NVLinks not only between the GPUs but also hooking the GPUs to the CPUs, will result in better computational efficiency.

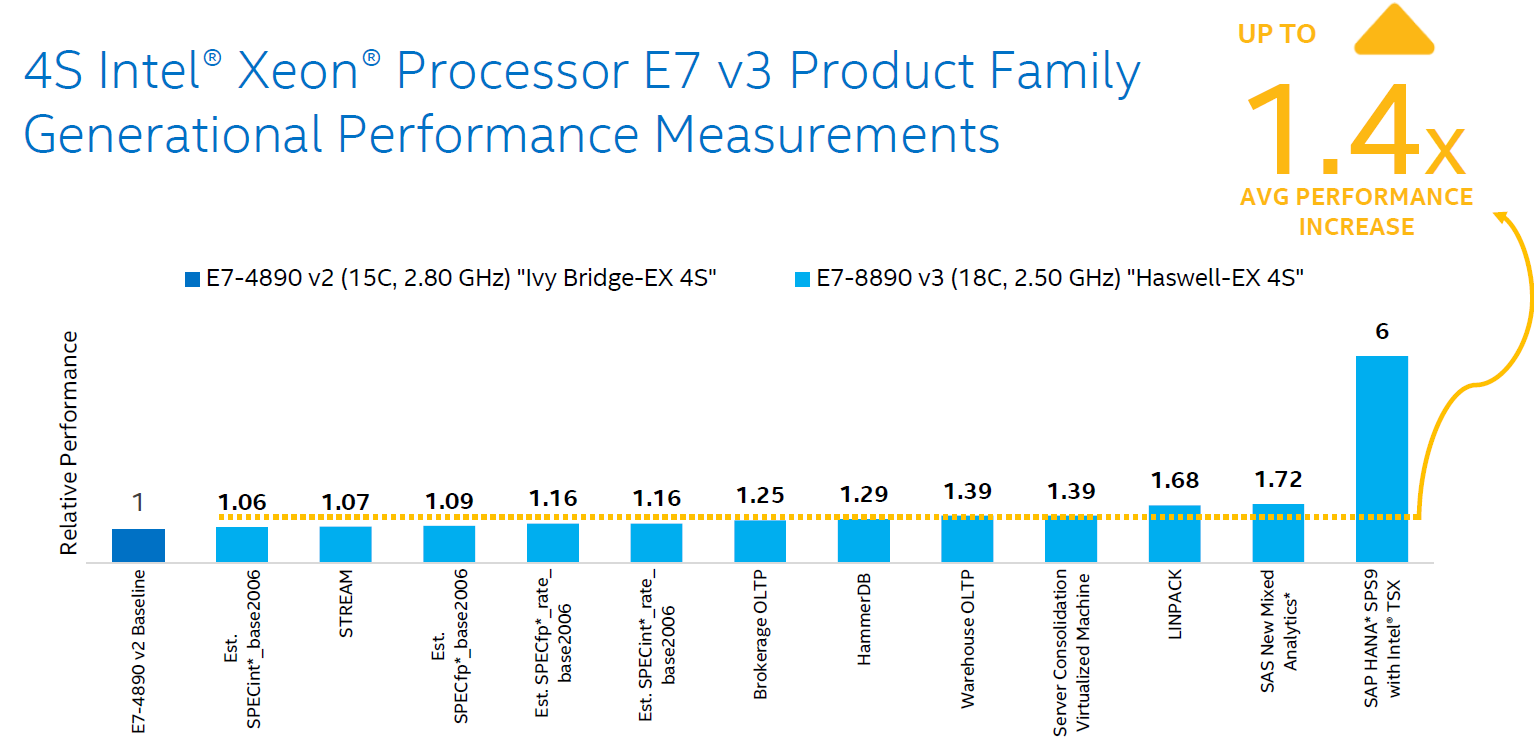

There is a lot of work to be done here, as performance results on hybrid machines mixing Intel Xeon CPUs and Nvidia “Volta” Tesla V100 GPU accelerators show. Nvidia ran the Linpack Fortran matrix math benchmark test on a prototype chunk of its second-generation “Saturn V” AI supercomputer, which it is installing early next year. That machine will eventually have 660 of its DGX-1V compute nodes, each of which has two Xeon E5 processors and eight of the Tesla V100 accelerators. The V100s are all cross-connected to each other in a 2D hybrid cube mesh using NVLink 2.0 ports, but the GPU complex is linked to the Xeon CPUs through a pair of PCI-Express 3.0 switches and the PCI-Express 3.0 controllers on the Xeon dies. This is not an optimal configuration, since the latest NVLink has more bandwidth and lower latency than PCI-Express 3.0. In that test, 33 nodes of the second-generation Saturn V machine had a theoretical peak performance of 1.82 petaflops at double precision, and yielded 1.07 petaflops on the Linpack test, for a computational efficiency of 58.8 percent. That 33-node system only burned 97 kilowatts, however, and yielded a very impressive 15.1 gigaflops per watt on Linpack.
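The computational efficiency quoted for the 33-node test is simply sustained Linpack performance divided by theoretical peak. As a minimal sketch of that arithmetic, using only the two petaflops figures given above (the function and constant names are ours, chosen for illustration):

```python
# Figures quoted in the article for the 33-node Saturn V prototype.
PEAK_PFLOPS = 1.82      # theoretical peak, double precision
LINPACK_PFLOPS = 1.07   # sustained result on the Linpack test


def computational_efficiency(sustained: float, peak: float) -> float:
    """Return sustained performance as a fraction of theoretical peak."""
    if peak <= 0:
        raise ValueError("peak must be positive")
    return sustained / peak


efficiency = computational_efficiency(LINPACK_PFLOPS, PEAK_PFLOPS)
print(f"Computational efficiency: {efficiency:.1%}")  # prints 58.8%
```

Both inputs must be in the same units; the ratio itself is dimensionless.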


For the large HPC and AI systems that companies are installing now and throughout 2018, tuning up the system and application software to better exploit the hardware will be key, particularly on new platforms from Intel, IBM, and AMD, which are vying for share in these areas.


The differences between peak theoretical computing capacity of a system and the actual performance it delivers can be stark. This is the case with any symmetric or asymmetric processing complex, where the interconnect and the method of dispatching work across the computing elements are crucial, and in modern hybrid systems that might tightly couple CPUs, GPUs, FPGAs, and memory class storage on various interconnects, the links could end up being as important as the compute.

As we have discussed previously, IBM’s new “Witherspoon” AC922 hybrid system, which was launched recently and which starts shipping next week, is designed from the ground up to have heavy Power9 serial compute and much heavier parallel Nvidia GPU compute, all coupled with NVLink interconnects and cache coherency across their respective memory, with very fast PCI-Express 4.0 CAPI and even faster Bluelink OpenCAPI ports to link to FPGAs and memory class storage, with high memory bandwidth on the CPUs and GPUs to keep their hungry processor threads fed with data and instructions.

CPUs, GPUs, and FPGAs are not cheap devices, so pushing up the performance closer to peak is vital because it is the same thing as buying less iron to do the same job.
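The point that running closer to peak amounts to buying less iron can be put in rough numbers. The sketch below takes the peak figure from the 33-node Saturn V test, divides it evenly across the nodes (an assumption of ours), and asks how many such nodes would be needed to sustain the same 1.07 petaflops at a different efficiency. The 80 percent figure is purely hypothetical and is not a measured or promised result for any system.

```python
import math

# From the 33-node Saturn V test described in the article.
NODES_TESTED = 33
PEAK_PFLOPS = 1.82
# Assumption: peak is spread evenly across identical nodes.
PEAK_PER_NODE_PFLOPS = PEAK_PFLOPS / NODES_TESTED


def nodes_needed(target_sustained_pflops: float, efficiency: float) -> int:
    """Nodes required to sustain a target at a given fraction of peak."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    sustained_per_node = efficiency * PEAK_PER_NODE_PFLOPS
    return math.ceil(target_sustained_pflops / sustained_per_node)


# Measured efficiency from the article, rounded to 58.8 percent.
print(nodes_needed(1.07, 0.588))
# Hypothetical 80 percent efficiency, for illustration only.
print(nodes_needed(1.07, 0.80))
```

Under these assumptions the same sustained result would need 33 nodes at 58.8 percent efficiency and 25 nodes at the hypothetical 80 percent, which is the sense in which efficiency and hardware spend trade off against each other.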